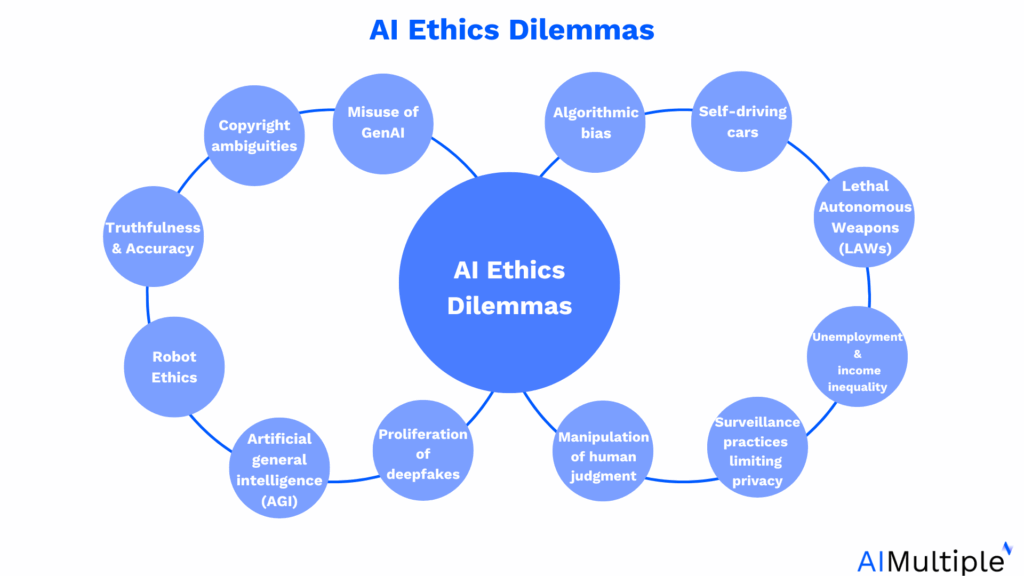

Ethical Challenges in the Age of AI and LLMs: What We Must Address Now

The rapid rise of AI and Large Language Models (LLMs) is reshaping industries, but it also brings ethical questions that society must confront now. As these models influence how we communicate, learn, and make decisions, ensuring their responsible use is critical.

Bias and Fairness

LLMs are trained on massive datasets, much of them scraped from the internet, so they can inherit societal biases, whether racial, gender-based, or ideological. Left unchecked, these biases can perpetuate discrimination in hiring tools, loan approvals, and even legal recommendations.

Solution: Regular audits, inclusive training data, and bias-detection tools must be part of the development lifecycle.
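One simple form an audit can take is comparing how often a model gives a favourable decision to each group. The sketch below is a minimal illustration, not a complete fairness audit: the function names and the sample data are hypothetical, and the 0.8 cutoff is the "four-fifths" rule of thumb used in US employment-selection guidance, which is only one of several possible fairness criteria.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the fraction of favourable decisions per group.

    `records` is an iterable of (group, decision) pairs, where decision
    is True for a favourable outcome (for example, "advance to interview").
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, decision in records:
        totals[group] += 1
        if decision:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest group selection rate to the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so there is no gap to measure
    return min(rates.values()) / highest


# Hypothetical audit sample: (group label, model decision)
sample = (
    [("A", True)] * 6 + [("A", False)] * 4
    + [("B", True)] * 3 + [("B", False)] * 7
)

rates = selection_rates(sample)
ratio = disparate_impact_ratio(rates)
flagged = ratio < 0.8  # assumed threshold: the four-fifths rule of thumb

print(rates, ratio, flagged)
```

A low ratio does not prove discrimination on its own, but it is a cheap signal that tells reviewers where to look more closely.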

Data Privacy

LLMs sometimes inadvertently output private or sensitive data that was included in their training sets. For example, personal phone numbers, addresses, or confidential company information may surface in generated content.

Solution: Techniques like differential privacy, data redaction, and stricter dataset curation can mitigate this risk.
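To make data redaction concrete, here is a minimal sketch that masks email addresses and phone numbers before text enters a training set. The patterns and the `redact` helper are illustrative assumptions: they cover only common email and North American style phone formats, and real pipelines typically combine broader patterns with trained PII detectors.

```python
import re

# Illustrative patterns only; they will miss many real-world formats.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(
    r"(?<!\w)(?:\+?\d{1,3}[\s.-]?)?(?:\(\d{3}\)|\d{3})[\s.-]?\d{3}[\s.-]?\d{4}(?!\w)"
)


def redact(text):
    """Replace matched emails and phone numbers with placeholder tags."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = PHONE_RE.sub("[PHONE]", text)
    return text


example = "Contact Jane at jane.doe@example.com or (555) 123-4567."
redacted = redact(example)
print(redacted)
```

Redaction of this kind removes obvious identifiers but does not give the formal guarantees of differential privacy, so the two are usually treated as complementary.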

Misinformation and Deepfakes

LLMs can generate fake news articles and impersonate individuals in text, and related generative models can create synthetic images, audio, and video that appear real. The potential for misinformation, especially in political and social contexts, is enormous.

Solution: Watermarking AI-generated content and developing verification tools can help users distinguish between real and synthetic content.
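One family of text watermarks works statistically: at each step the generator favours a pseudo-random "green" subset of the vocabulary, and a detector later checks whether a text contains more green tokens than chance would explain. The toy sketch below illustrates that idea only. The key, the 0.5 green fraction, the vocabulary, and every function name are assumptions made for the example, and it does not reproduce any deployed watermarking scheme.

```python
import hashlib
import math

GREEN_FRACTION = 0.5  # assumed share of the vocabulary marked "green" at each step


def is_green(prev_token, token, key="demo-key"):
    """Pseudo-randomly assign `token` to the green list, seeded by the previous token."""
    digest = hashlib.sha256(f"{key}|{prev_token}|{token}".encode("utf-8")).digest()
    # Map the first 8 bytes to [0, 1) and compare against the green fraction.
    value = int.from_bytes(digest[:8], "big") / 2**64
    return value < GREEN_FRACTION


def watermark_z_score(tokens, key="demo-key"):
    """z-score of the observed green-token count against the no-watermark expectation."""
    n = len(tokens) - 1  # number of (previous, current) pairs scored
    if n < 1:
        return 0.0
    green = sum(is_green(prev, cur, key) for prev, cur in zip(tokens, tokens[1:]))
    expected = GREEN_FRACTION * n
    std = math.sqrt(n * GREEN_FRACTION * (1 - GREEN_FRACTION))
    return (green - expected) / std


def toy_watermarked_sequence(vocab, length, start, key="demo-key"):
    """Toy generator: always pick the first green token in vocabulary order."""
    tokens = [start]
    for _ in range(length):
        candidates = [t for t in vocab if is_green(tokens[-1], t, key)]
        tokens.append(candidates[0] if candidates else vocab[0])
    return tokens


vocab = [f"w{i}" for i in range(50)]  # hypothetical vocabulary
marked = toy_watermarked_sequence(vocab, 100, "w0")
z = watermark_z_score(marked)
print(f"z-score for watermarked sequence: {z:.1f}")
```

A large positive z-score suggests the text was produced with the matching key, while ordinary text should score near zero. Real detectors also have to cope with paraphrasing and editing, which weaken the signal.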

Job Displacement

AI tools are automating tasks previously done by humans — from customer service and content writing to data analysis. While this boosts productivity, it also threatens livelihoods.

Solution: Invest in reskilling programs and emphasize human-AI collaboration over replacement.

Lack of Accountability

When an AI system makes a wrong decision, such as denying a loan or misdiagnosing an illness, who is responsible? The developer? The user? This grey area needs urgent clarification.

Solution: Establish legal frameworks and guidelines for model accountability that determine who answers for AI errors.

Final Thoughts

AI and LLMs are powerful tools with the potential to do great good — or harm. By proactively addressing these ethical challenges, we can steer their development in a direction that benefits everyone, not just a select few.